Discussion

Farhad Rahimi

Researcher

Prompt Engineering

Prompt engineering is a relatively new discipline for developing and optimizing prompts to use language models (LMs) efficiently for a wide variety of applications and research topics. Prompt engineering skills help to better understand the capabilities and limitations of large language models (LLMs). Researchers use prompt engineering to improve the capacity of LLMs on a wide range of common and complex tasks, such as question answering and arithmetic reasoning. Developers use prompt engineering to design robust and effective prompting techniques that interface with LLMs and other tools.

Farhad Rahimi

Researcher

Zero-Shot, One-Shot, and Few-Shot Prompting

Zero-Shot Prompting:

Zero-shot learning refers to a machine learning model's ability to perform a task it was not explicitly trained on, without having been shown any examples of that specific task during training. Zero-shot prompting relies on this ability: the prompt used to interact with the model contains no examples or demonstrations. It instructs the model directly to perform the task, with no additional examples to steer it.
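A minimal sketch of a zero-shot prompt, assuming a sentiment-classification task. The function name `zero_shot_prompt` and the "Text:/Answer:" layout are illustrative choices, not a required format, and no model call is shown: the resulting string would be sent to whatever LM client you use.

```python
def zero_shot_prompt(instruction: str, text: str) -> str:
    """Build a zero-shot prompt: the instruction and the input only, no examples."""
    return f"{instruction}\n\nText: {text}\nAnswer:"


prompt = zero_shot_prompt(
    "Classify the sentiment of the text as positive, negative, or neutral.",
    "I think the vacation was okay.",
)
print(prompt)
```

The prompt ends with "Answer:" so the model's most natural continuation is the label itself.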

One-Shot Prompting:

A one-shot prompt is a technique in which a single example of a task is provided to an AI model to guide its response. It consists of an instruction and one input-output pair, which helps the model understand the desired format and complete a similar new task. It is often more effective than a zero-shot prompt (no examples) for tasks that require a specific output style or format.

Few-Shot Prompting:

Few-shot prompting can be used to enable in-context learning: we provide several demonstrations in the prompt to steer the model toward better performance. The demonstrations serve as conditioning for subsequent examples where we would like the model to generate a response.
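Zero-shot, one-shot and few-shot prompts differ only in how many demonstrations appear before the query. The sketch below uses a hypothetical helper, `k_shot_prompt`, with an illustrative "Text:/Answer:" layout to build all three from one function: zero examples gives a zero-shot prompt, one gives a one-shot prompt, and several give a few-shot prompt. The demonstrations and labels are made up for illustration.

```python
from typing import List, Tuple


def k_shot_prompt(instruction: str, examples: List[Tuple[str, str]], query: str) -> str:
    """Build a prompt with len(examples) demonstrations.

    0 examples -> zero-shot, 1 -> one-shot, more than 1 -> few-shot.
    """
    parts = [instruction, ""]
    for text, label in examples:
        # Each demonstration is a complete input-output pair in the query's layout.
        parts.append(f"Text: {text}\nAnswer: {label}\n")
    # The query uses the same layout but leaves the answer for the model to fill in.
    parts.append(f"Text: {query}\nAnswer:")
    return "\n".join(parts)


instruction = "Classify the sentiment of the text as positive, negative, or neutral."
demos = [
    ("This movie was fantastic!", "positive"),
    ("The service was slow and rude.", "negative"),
    ("The package arrived on Tuesday.", "neutral"),
]
query = "I think the vacation was okay."

zero_shot = k_shot_prompt(instruction, [], query)
one_shot = k_shot_prompt(instruction, demos[:1], query)
few_shot = k_shot_prompt(instruction, demos, query)
print(few_shot)
```

Keeping every demonstration in exactly the same layout as the query is a deliberate choice: the demonstrations act as conditioning, so the model is more likely to continue the pattern with a single label.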

By comparing zero-shot, one-shot, and few-shot inference, you will take the first step towards prompt engineering and see how it can enhance the generative output of large language models.

Code sample on GitHub:

https://github.com/farhadrahimiinfo/Natural_Language_Processing/blob/main/02_summarize_dialogue.ipynb

Farhad Rahimi

Researcher

Meta Prompting

Meta prompting is an advanced prompting technique that focuses on the structural and syntactical aspects of tasks and problems rather than their specific content details. The goal of meta prompting is to construct a more abstract, structured way of interacting with large language models (LLMs), emphasizing the form and pattern of information over traditional content-centric methods.

Key Characteristics

According to Zhang et al. (2024), the key characteristics of meta prompting can be summarized as follows:

1. Structure-oriented: Prioritizes the format and pattern of problems and solutions over specific content.

2. Syntax-focused: Uses syntax as a guiding template for the expected response or solution.

3. Abstract examples: Employs abstracted examples as frameworks, illustrating the structure of problems and solutions without focusing on specific details.

4. Versatile: Applicable across various domains, capable of providing structured responses to a wide range of problems.

5. Categorical approach: Draws from type theory to emphasize the categorization and logical arrangement of components in a prompt.

Ref: https://www.promptingguide.ai/techniques/prompt_chaining
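To make the structure-oriented idea concrete, here is a minimal sketch of a meta prompt. The template is a hypothetical illustration, not the one used by Zhang et al. (2024): instead of a worked example with concrete content, it gives an abstract solution skeleton with bracketed placeholders, and the same skeleton is reused for problems from different domains.

```python
# Hypothetical meta prompt: it fixes the *structure* of the answer with abstract
# placeholders rather than showing a worked example with concrete content.
META_PROMPT_TEMPLATE = """\
Solve the problem using the structure below. Fill in every section and keep the headings.

Problem: {problem}

Solution structure:
1. Restate: [the problem in your own words]
2. Known: [the given quantities or facts]
3. Plan: [the method or formula to apply]
4. Steps: [the reasoning, one step per line]
5. Final Answer: [the answer only]
"""


def meta_prompt(problem: str) -> str:
    """Insert a concrete problem into the abstract, content-free template."""
    return META_PROMPT_TEMPLATE.format(problem=problem)


# The same template serves problems from unrelated domains.
math_prompt = meta_prompt("A train travels 120 km in 1.5 hours. What is its average speed?")
logic_prompt = meta_prompt("If all cats are mammals and Tom is a cat, is Tom a mammal?")
print(math_prompt)
```

The placeholders describe what each section should contain (structure and syntax) without revealing any answer content, which is the contrast with the content-centric demonstrations used in few-shot prompting.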

Farhad Rahimi

Researcher

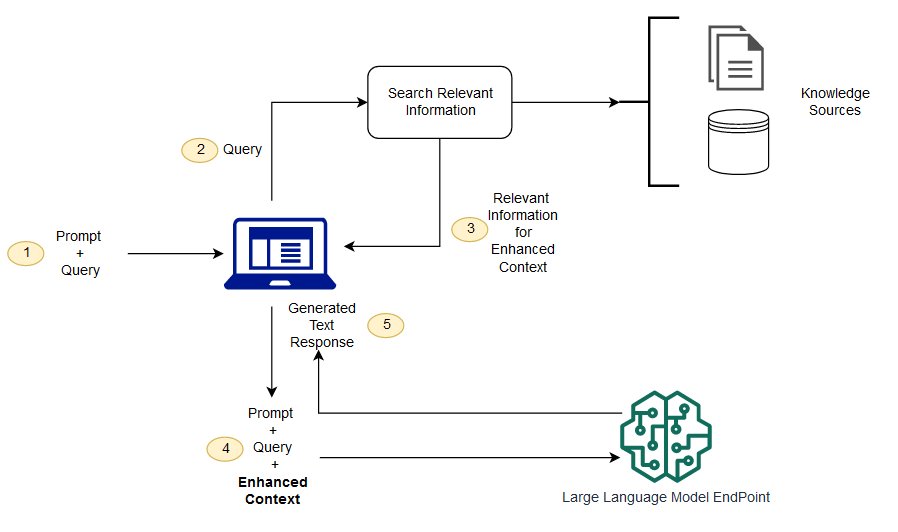

Retrieval Augmented Generation (RAG)

Retrieval-Augmented Generation (RAG) is the process of optimizing the output of a large language model, so it references an authoritative knowledge base outside of its training data sources before generating a response. Large Language Models (LLMs) are trained on vast volumes of data and use billions of parameters to generate original output for tasks like answering questions, translating languages, and completing sentences. RAG extends the already powerful capabilities of LLMs to specific domains or an organization's internal knowledge base, all without the need to retrain the model. It is a cost-effective approach to improving LLM output so it remains relevant, accurate, and useful in various contexts.

Ref: https://aws.amazon.com/what-is/retrieval-augmented-generation/

RAG combines an information retrieval component with a text generator model. RAG can be fine-tuned, and its internal knowledge can be modified efficiently without retraining the entire model.

RAG takes an input and retrieves a set of relevant/supporting documents from a given source (e.g., Wikipedia). The documents are concatenated as context with the original input prompt and fed to the text generator, which produces the final output. This makes RAG adaptive to situations where facts evolve over time, which is very useful because an LLM's parametric knowledge is static. RAG allows language models to bypass retraining, enabling access to the latest information for generating reliable outputs via retrieval-based generation.

Ref: https://www.promptingguide.ai/techniques/rag
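The retrieve-then-concatenate flow can be sketched in a few lines of Python. This is a minimal illustration under clearly stated assumptions: the in-memory `knowledge_base` list stands in for an external source such as Wikipedia, a toy bag-of-words cosine similarity stands in for the dense vector search most RAG systems use, and the generation step is left out (the returned prompt would be passed to whatever LLM you use). All function names are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query (toy stand-in for a vector index)."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_rag_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Concatenate the retrieved documents as context with the original input."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\nAnswer:"
    )


knowledge_base = [
    "The office cafeteria is open from 8 am to 3 pm on weekdays.",
    "Employees can request a new laptop through the IT service portal.",
    "The annual company retreat takes place in September.",
]
prompt = build_rag_prompt("When is the cafeteria open?", knowledge_base, k=1)
print(prompt)
```

Editing `knowledge_base` changes what the model receives at query time without touching its weights, which is the property described above as bypassing retraining.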

The diagram above shows the conceptual flow of using RAG with LLMs.